Popular Articles

Elevating Data Privacy: The Compelling Reasons to Choose WireWheel

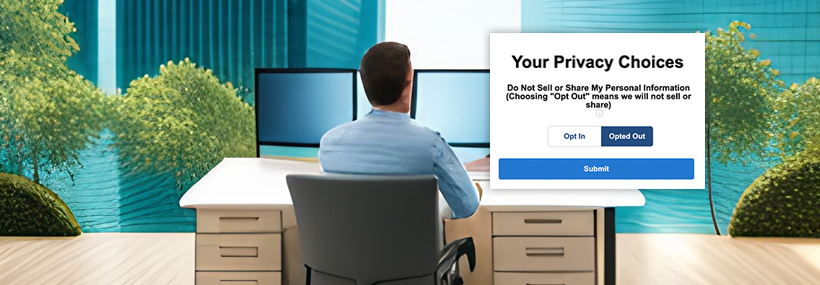

Evolving Marketing Practices: From Unsubscribing to Opting-Out – A Paradigm Shift

Demystifying the Cookie Audit: A Comprehensive Guide to Optimizing Your Digital Trail

Navigating the Digital Cookie Jar: Understanding First-Party vs Third-Party Cookies

The Intersection of Generative AI, Data Privacy, and GDPR: Unlocking Marketing Opportunities Responsibly

Personalization, ChatGPT and Privacy

Effective and Ethical Use of Scripts, Tags, and Cookies on Your Websites

Ultimate Guide to Preference and Consent Management, and the Global Privacy Control

Three Things Marketers Need to Know with Google’s Updated Privacy Terms of Service

5 Essential Things Every Marketing Leader Must Know Before July 2023 to Navigate the New Privacy Frontier

When to Use Consent Management and the Importance of Consent Management

Indiana Passes Comprehensive Privacy Law: What You Need to Know

Analyzing the Washington State My Health My Data Act: Implications for Healthcare and Digital Marketing

Iowa Passes Comprehensive Privacy Law: What You Need to Know
